Hosted LLMs

Frontier open-weight LLMs, hosted on the National Research Platform (NRP)

Free access for researchers and educators to a rotating catalog of frontier open-weight models — through a hosted chat interface, an OpenAI-compatible API, and ready-made configs for the major coding CLIs.

Three steps

How to get access

Step 1: Get an NRP account

Sign in once with your institutional or research identity to register a Nautilus account.

Step 2: Request an LLM-enabled namespace

Contact the NRP team and we will enable LLM access on your namespace so that its members can generate tokens.

Step 3: Generate your LLM token

Use the token page to create a personal API token, then plug it into Open WebUI, Chatbox, or any OpenAI-compatible client.
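Any OpenAI-compatible client works. For illustration, here is a minimal Python sketch using only the standard library. It assumes the endpoint follows the usual OpenAI convention of `POST {base_url}/chat/completions` with a Bearer token. The base URL is not given on this page, so it is read from a hypothetical `NRP_LLM_BASE_URL` environment variable; `NRP_LLM_TOKEN` and the model ID `qwen3` are likewise assumptions for this example.

```python
import json
import os
import urllib.request


def build_chat_request(base_url: str, token: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat-completions request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",  # personal token from Step 3
            "Content-Type": "application/json",
        },
        method="POST",
    )


def chat(base_url: str, token: str, model: str, prompt: str) -> str:
    """Send the request and return the text of the first choice."""
    req = build_chat_request(base_url, token, model, prompt)
    with urllib.request.urlopen(req, timeout=120) as resp:
        payload = json.load(resp)
    return payload["choices"][0]["message"]["content"]


if __name__ == "__main__":
    # Hypothetical variable names: point them at the endpoint and token NRP gives you.
    base_url = os.environ.get("NRP_LLM_BASE_URL")
    token = os.environ.get("NRP_LLM_TOKEN")
    if base_url and token:
        print(chat(base_url, token, "qwen3", "Explain what a Kubernetes namespace is in two sentences."))
```

Keeping `build_chat_request` separate from `chat` lets you inspect the exact URL, headers, and JSON body offline before anything is sent.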

Models we host

Frontier open-weight models with strong reasoning, coding, and multimodal capabilities. Pick the one that fits your task.

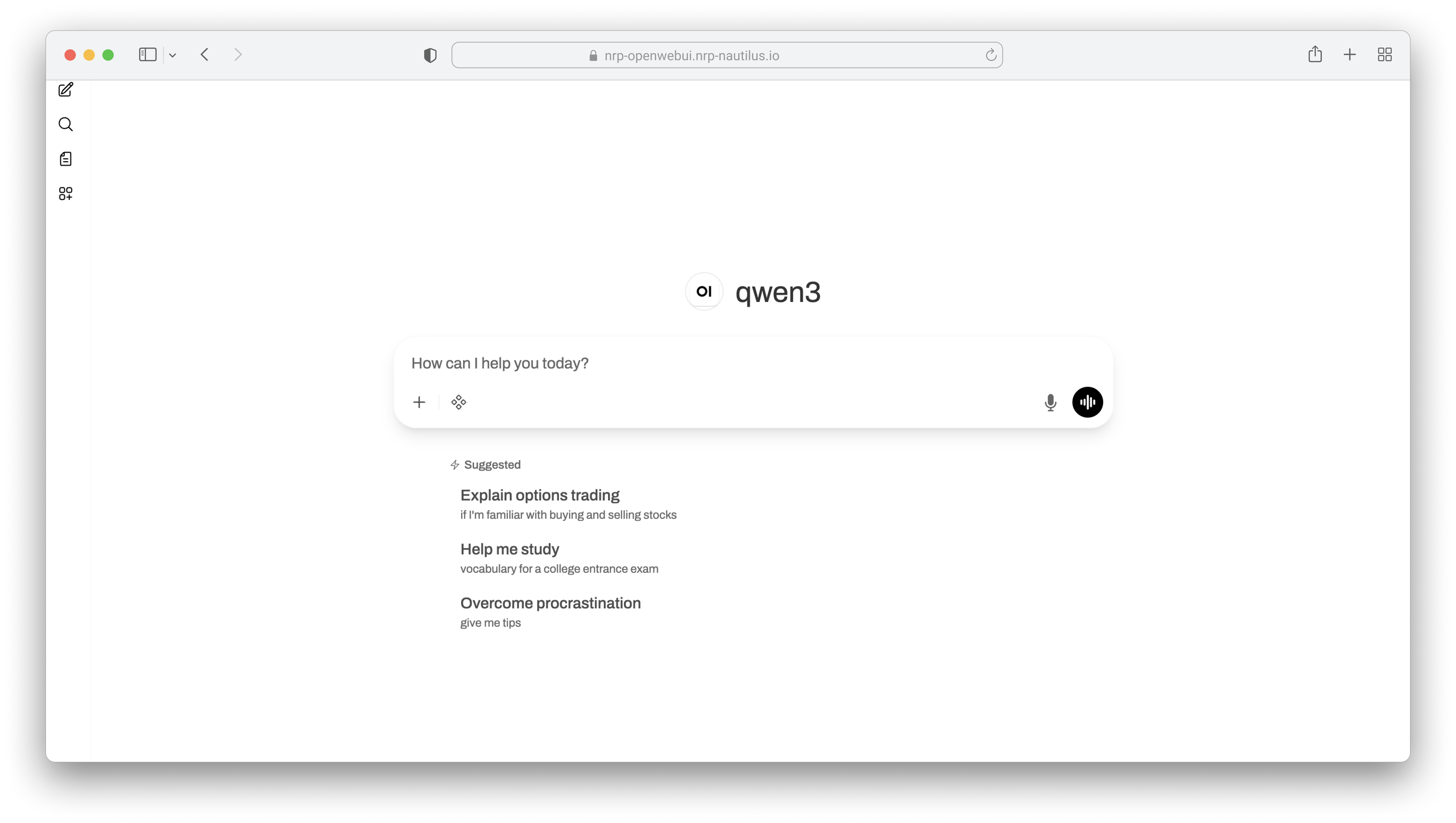

qwen3

Flagship frontier multimodal mixture-of-experts (MoE) model, with performance comparable to Claude and Gemini. Best for general reasoning, long-context work, and multimodal tasks.

kimi

Moonshot's 1T-parameter frontier coding model with multimodal inputs. Best for agentic coding workflows.

gemma

Google's Gemma 4: multimodal, with optional reasoning and efficient frontier-class performance. A lighter-weight model that still handles images.

glm-4.7

Zhipu's 358B frontier coding model with official FP8 weights. Strong on code and reasoning tasks.

minimax-m2

Efficient frontier coding model: 230B parameters in native FP8, so the weights take roughly 230 GB at one byte per parameter and fit comfortably within the 320 GB of four 80 GB A100s.

olmo

Allen AI's fully open-source 32B instruction model, with transparent training data. Pick it when reproducibility matters.

gpt-oss

OpenAI's open-weight agentic model: a tiny GPU footprint, strong tool use, and a candidate for long-term support (LTS).
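The names above may not match the exact model IDs the API expects. OpenAI-compatible servers commonly expose `GET {base_url}/models`, which returns a JSON object whose `data` list holds entries with an `id` field. Assuming NRP's endpoint does the same, this small standard-library sketch lists the available IDs; the helper names and the `NRP_LLM_BASE_URL` / `NRP_LLM_TOKEN` environment variables are hypothetical.

```python
import json
import os
import urllib.request


def parse_model_ids(payload: dict) -> list[str]:
    """Extract sorted model IDs from an OpenAI-style /models response."""
    return sorted(entry["id"] for entry in payload.get("data", []))


def list_models(base_url: str, token: str) -> list[str]:
    """Fetch GET {base_url}/models with a Bearer token and return the IDs."""
    req = urllib.request.Request(
        base_url.rstrip("/") + "/models",
        headers={"Authorization": f"Bearer {token}"},
    )
    with urllib.request.urlopen(req, timeout=30) as resp:
        return parse_model_ids(json.load(resp))


if __name__ == "__main__":
    # Hypothetical environment variables; substitute your own endpoint and token.
    base_url = os.environ.get("NRP_LLM_BASE_URL")
    token = os.environ.get("NRP_LLM_TOKEN")
    if base_url and token:
        for model_id in list_models(base_url, token):
            print(model_id)
```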

Use the client you already love

Hosted chat interfaces, desktop apps, and coding CLIs all work with NRP-hosted LLMs through the OpenAI-compatible endpoint.

How LLMs are being used on the NRP

Researchers, educators, and NRP operators are using hosted LLMs to analyze documents, build software, diagnose workloads, and understand platform usage.

NRP infrastructure is connected to LLM-based tooling so operators can query and diagnose failing nodes using cluster context.

Users can diagnose problematic pods by inspecting logs, events, and related pod details through NRP-assisted workflows.

Researchers can parse and summarize large document collections, then extract signals such as sentiment from sources like securities filings.

Classrooms and research groups can use coding agents with NRP-hosted models to develop, review, and iterate on software projects.

NRP develops Model Context Protocol (MCP) servers that connect to the NRP accounting system to help teams understand platform usage and surface operational insights.

Ready to get started?

We will get you onto NRP-hosted LLMs and answer any questions about access, namespaces, and tokens.